1000Base-T is so 2005 - but 10GbE is cheaper than you think

I’ve got a fileserver with two ZFS pools on it. Many terabytes of storage and much more reliable than individual disks. Single drives aren’t fast or reliable and I can’t fit all my Steam games on my RAID0 SSD pair. But the fileserver can read and write at close to 500MB/second. However, it’s on the other end of a gigabit ethernet connection, so it tops out at 120MB/sec - barely faster than a normal HDD. So what can we do? Isn’t 10GbE still crazy expensive?

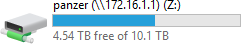

I ended up with a drive letter on my desktop mapped to a SMB share on the fileserver and (most) Windows apps treat it no differently to a local drive. With a Steam library on it, I’m not going to be short on space for a while. But every time I go to load something, it’s bottlenecked by the gigabit connection.
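For a sense of where that gigabit ceiling comes from, here is a rough back-of-the-envelope sketch. It assumes a standard 1500-byte MTU, plain IPv4 and TCP headers with no options, and ignores SMB's own overhead, so treat the results as best-case estimates rather than measurements.

```python
# Best-case TCP payload rate on an Ethernet link.
# Assumptions (not from the post): 1500-byte MTU, IPv4 + TCP without options.

PREAMBLE_SFD = 8      # preamble + start-of-frame delimiter
ETH_HEADER = 14       # destination MAC, source MAC, EtherType
FCS = 4               # frame check sequence
INTERFRAME_GAP = 12   # mandatory idle time between frames
IP_TCP_HEADERS = 40   # 20 bytes IPv4 + 20 bytes TCP
MTU = 1500


def tcp_payload_mb_per_s(link_mbit: float) -> float:
    """Return the best-case TCP payload rate in MB/s (1 MB = 10**6 bytes)."""
    wire_bytes = PREAMBLE_SFD + ETH_HEADER + MTU + FCS + INTERFRAME_GAP
    payload_bytes = MTU - IP_TCP_HEADERS
    raw_mb_per_s = link_mbit / 8
    return raw_mb_per_s * payload_bytes / wire_bytes


for name, mbit in [("1000Base-T", 1000), ("10GbE", 10000)]:
    print(f"{name}: about {tcp_payload_mb_per_s(mbit):.0f} MB/s")
```

The gigabit figure lands just under 120MB/sec, which matches what the share tops out at. The 10GbE figure is well above what the ZFS pools can deliver, so the disks become the bottleneck again.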

Then I realised that a good proportion of the 802.11ac hardware out there supports 1300Mbit or higher. Once my wireless network was (in theory) faster than my wired one, something had to be done.

And I mean… 1000Base-T was ratified in 1999. Nothing wrong with that, but maybe it’s time to move on. So here’s how I solved this problem for less than $150 for the entire setup!

What are the options?

The first thing people would think of is 10GBase-T: 10 gigabits over up to 55m of existing Cat6 copper cable. The cheapest I’ve found these cards on the used market is around $200 each. New, you’re looking at $500 or more per card. This is probably the way you’d want to go if you have existing in-wall cabling, but there are certainly cheaper options.

The NBaseT standard was recently announced and it looks promising. This provides 2.5GBase-T and 5GBase-T, which will provide a nice speed boost for the home user who doesn’t need 10Gbit but wants something faster than existing gigabit. But you’ll notice I’m saying “will” here - this is a brand new standard and no commercial products exist yet (at the time I’m writing this). If you’re reading this a few years in the future, this might be a good option. But right now, there’s nothing, and prices for promised hardware don’t look that much cheaper than second-hand 10GBase-T gear.

There are also non-Ethernet options, like InfiniBand, which can be configured to run IP over InfiniBand. However, this is CPU intensive due to a lack of optimisation and isn’t well supported on modern OSes. Additionally, a lot of the inexpensive hardware doesn’t have driver support on newer Windows versions.

Fibre Channel hardware is also plentiful and cheap - I’ve actually already got three dual-port 4Gbit PCIe cards. While the operation of Fibre Channel itself is close to SCSI over fibre, they can in theory be configured to do IP over Fibre Channel. Support for this, however, appears to have completely vanished from the Linux kernel and hasn’t been supported on Windows for a long time. I’ve also heard it doesn’t perform well even if it does work.

10GBase-CX4 hardware is also available. This runs over the same CX4 copper cables that InfiniBand uses. However, this standard became less common after alternatives were developed, meaning some of the available hardware isn’t well supported on modern Windows versions. These cards are still available if you can make them work, but even if you do, the maximum cable length is 15m. The switches are fairly cheap, so if you can make the cards work and your machines aren’t too far apart, this might be fine.

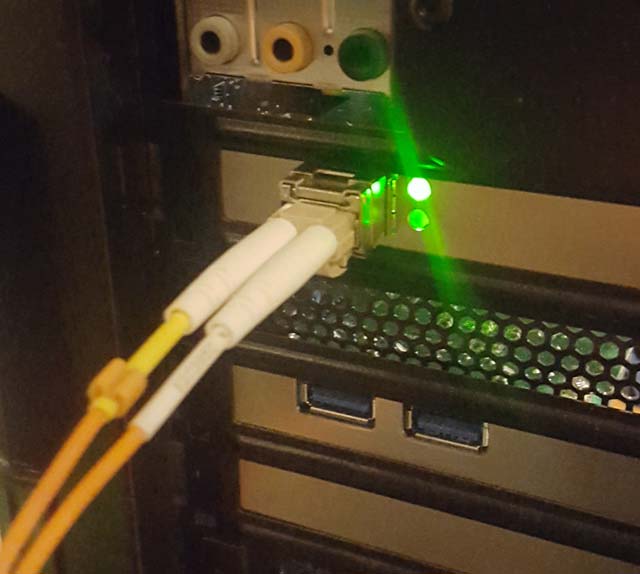

But I’ve saved the best until last: 10GbE cards with SFP+ slots are cheap, widely available and still decently well supported. I picked up a pair of Mellanox ConnectX-2 cards on eBay for about AU$70 including shipping. They’re supported on Linux and by the inbuilt drivers on Windows 10. Mellanox’s current WinOF drivers even still support them: their website doesn’t include them on the supported list, but I installed the drivers anyway and the card works fine with them. Make sure you install WinOF, not WinOF-2.

Keep in mind that this is a PCIe x8 card. My desktop is LGA2011, so the motherboard has four full length PCIe slots, but in the fileserver which is LGA1150, it’s in the slot you’d normally put a GPU. Fortunately, that board has onboard graphics, so one is not needed.
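If you’re wondering how much slot bandwidth a single 10 gigabit port actually needs, the arithmetic can be sketched as below. It assumes the card negotiates PCIe 2.0 signalling (5 GT/s per lane with 8b/10b encoding), which the post doesn’t state, and it ignores PCIe packet overhead, so the result is a lower bound rather than a recommendation.

```python
import math

# Assumption: PCIe 2.0, 5 GT/s per lane, 8b/10b encoding
# (8 of every 10 bits on the wire carry data).
LANE_RAW_MBIT = 5000
LANE_DATA_MBIT = LANE_RAW_MBIT * 8 // 10   # 4000 Mbit/s of data per lane
LANE_MB_PER_S = LANE_DATA_MBIT / 8         # 500 MB/s per lane, per direction


def lanes_needed(link_mbit: int) -> int:
    """Minimum number of lanes whose combined data rate covers the link rate."""
    link_mb_per_s = link_mbit / 8
    return math.ceil(link_mb_per_s / LANE_MB_PER_S)


print(f"x8 slot: {8 * LANE_MB_PER_S:.0f} MB/s per direction")
print(f"lanes needed for one 10GbE port: {lanes_needed(10000)}")
```

Under those assumptions a full x8 link has plenty of headroom for one port. Whether a narrower slot physically accepts the card is a separate question that depends on the motherboard.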

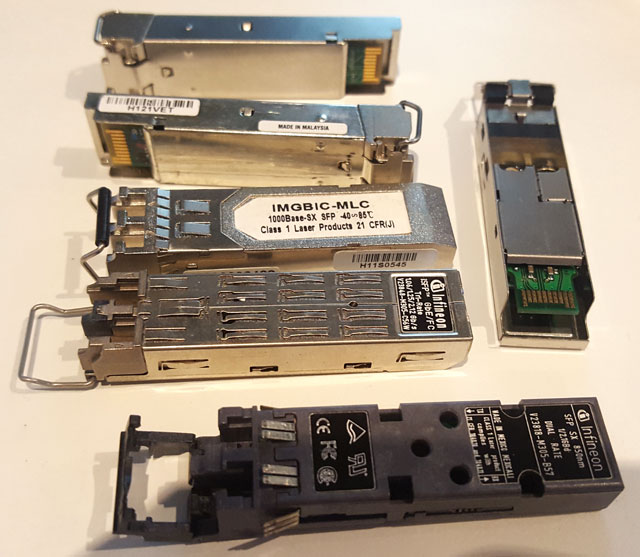

What is a SFP?

SFP stands for “small form-factor pluggable”. It’s a standard for a small transceiver - basically an adaptor between your network card and your desired cabling medium. Although it was created with optical fibre transceivers in mind, there are SFP modules for copper-based standards like 1000Base-T, 10GBase-T and even VDSL. A standard SFP supports gigabit speeds, but the later SFP+ standard adds support for 10 gigabit speeds.

There are many different types of SFP and they all look similar from the outside, so make sure you buy the right one. With the Mellanox card above, you’re most likely after a 10GBase-SR SFP+ module.

Pictured: a small handful of SFPs of varying brands. Mostly 1000Base-SX, some 1/2Gbit Fibre Channel.

Can’t we just plug them directly together?

You may not actually need SFPs or fibre after all. If your fileserver and desktop are close together, there’s a cheaper option. SFP+ Direct Attach cables are basically a cable with a SFP on both ends. The ones in 1m to 5m lengths are usually passive cables.

Longer lengths will likely be active cables. Some are copper-based, some are optical. I’d generally avoid these as they’re often not cheaper than inexpensive SFPs and a length of fibre. And if you’re buying optical ones - you’re basically buying two SFPs with non-removable fibre anyway. Also, make sure they are SFP+ not just SFP, otherwise they will not work at 10Gbit.

More fibre in your networking diet?

So what is a fibre optic cable? In short, a laser shines down a piece of glass fibre and switches on and off to send data. In single mode fibre, the laser shines directly down the middle of the fibre. In multimode fibre, it bounces off the edges as it travels. Single mode fibre achieves longer distances but requires much more precise and expensive optics. Multimode will be fine for our application, as when we say “longer distances” in fibre optics, we’re talking kilometres.

The outside of the fibre is also typically enclosed in plastic insulation, like an ordinary copper cable. The diameter of the actual fibre is minuscule - about the size of a human hair. This outer sleeve protects the fragile glass fibre from being damaged.

There’s also a minimum bend radius of about 15cm. Bending the cable tighter than this won’t immediately damage it - but it may reduce performance by increasing the amount of light that escapes the fibre. I certainly wouldn’t fold it directly over but it’s no more fragile than a normal copper based cable.

Twisted pair ethernet comes in multiple categories such as Cat5, Cat5e, Cat6 and so on, with each increasing category being able to handle higher bandwidth signals. Multi-mode fibre is similar. These are designated OM1 to OM4, with OM1 being the lowest quality. OM1 and OM2 are typically found in an orange coloured cable, with OM3 and OM4 typically in an aqua coloured cable.

For 10GBase-SR, the cheap OM1 cables will work up to about 33m. OM2 will give you about 80m. OM3 and OM4 were designed for 10 gigabit applications, so will provide 300m and 400m respectively. If 33m is enough, by all means go for the cheap stuff. A 15m run will cost about $20.
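Picking a fibre grade for a given run can be sketched as a simple lookup. The reach figures below are the nominal 10GBase-SR limits per grade (the post rounds the OM1 and OM2 numbers), and the function assumes that a lower grade is always the cheaper one, which is a simplification. The function name is made up for this example.

```python
from typing import Optional

# Nominal 10GBase-SR reach per multimode grade, in metres.
# Listed from lowest grade to highest; assumed to also be cheapest to dearest.
REACH_M = {"OM1": 33, "OM2": 82, "OM3": 300, "OM4": 400}


def cheapest_grade(run_m: float) -> Optional[str]:
    """Return the lowest fibre grade that covers the run, or None if none does."""
    for grade, reach in REACH_M.items():   # dicts keep insertion order
        if run_m <= reach:
            return grade
    return None   # too long for multimode 10GBase-SR


for run in (15, 50, 250, 500):
    print(f"{run}m run: {cheapest_grade(run)}")
```

A typical run between two machines in the same room or house falls into the first bucket, which is why the cheap orange cable is usually enough.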

Unlike twisted pair, though, there are different types of connector. For nearly every SFP out there, you want a LC connector on both ends. Each module transmits on one fibre and receives on the other, so when you’re connecting two cards directly together the pair has to cross over. If yours doesn’t, you can swap the two fibres around on one end by pulling apart the connector.

Just a quick word on safety - don’t look into the optical end of the SFP, and don’t look into the end of the fibre. Most short range optics, like the 10GBase-SR modules mentioned above, are probably not powerful enough to cause eye damage, but it pays to be safe. Long range optics for single mode fibre can and will damage your eyes - and since they’re infrared, the first you’ll see of the damage is a hole in your retina.

I had the fibre lying around (it was with a bunch of parts that I was given) - so I don’t know what it cost, but some eBay searching shows it would run about $30, so I’m going to say $150 all up.

Topology

I went for a direct connection between my fileserver and my desktop, not connected to the rest of the network. This is the simplest way of doing it as it doesn’t require a compatible switch. I’ve had a look around and there are a few options, but none are particularly cheap. Mikrotik’s range has a few interesting options such as the CSS326-24G-2S+RM with two 10Gbit ports and 24 1Gbit ports, or the CRS317-1G-16S+RM with 16 10Gbit ports and one 1Gbit port. Unfortunately there’s nothing in the product line with more than two but fewer than 16 10Gbit ports, and no other vendor has much in this price range.

Whether having just two machines on the 10Gbit side is worth it or not is up to you. If your model is one server and many clients, it’ll be worth it, but my use case is transfer between two machines, so it’s a little less useful.

Broken Windows

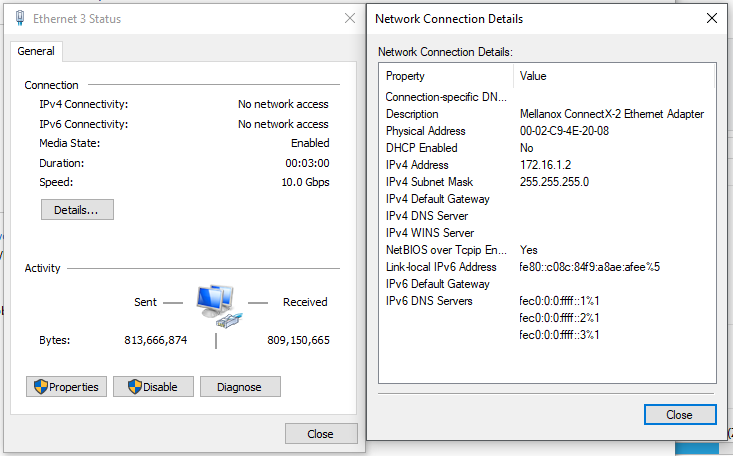

For now, the fileserver has just kept its existing 1000Base-T connection to the rest of the network, and the 10 gigabit link is purely point to point. Configure static IPs on both ends, bring the links up, and connect to the fileserver by IP (using the hostname will resolve to its address on the normal network).
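Before typing static addresses into both machines, it can help to sanity-check the plan. The sketch below uses Python’s `ipaddress` module. All of the addresses are hypothetical examples, not the ones used in this setup, and `check_point_to_point` is a name made up for this example. The main things to verify are that both ends share a subnet and that the subnet doesn’t collide with the existing gigabit network.

```python
import ipaddress

# Hypothetical addressing, not taken from the post. A /30 leaves exactly
# two usable host addresses, which is all a point-to-point link needs.
SERVER_10G = ipaddress.ip_interface("10.10.10.1/30")
DESKTOP_10G = ipaddress.ip_interface("10.10.10.2/30")
MAIN_LAN = ipaddress.ip_network("192.168.1.0/24")   # assumed existing gigabit LAN


def check_point_to_point(a, b, other_networks):
    """Return a list of problems found in a two-host static IP plan."""
    problems = []
    if a.network != b.network:
        problems.append(f"{a} and {b} are not in the same subnet")
    if a.ip == b.ip:
        problems.append(f"both ends use {a.ip}")
    for net in other_networks:
        if a.network.overlaps(net) or b.network.overlaps(net):
            problems.append(f"{net} overlaps the point-to-point subnet")
    return problems


issues = check_point_to_point(SERVER_10G, DESKTOP_10G, [MAIN_LAN])
print(issues or "plan looks consistent")
```

Keeping the 10 gigabit link on its own subnet is what lets the desktop choose the fast path simply by connecting to the server’s address on that subnet.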

The fileserver’s running Linux, and my desktop’s running Windows 10. One edit to /etc/network/interfaces on the Linux side and a trip through network properties on the Windows side later… we run into two problems, both on the Windows side.

The first is that Windows wants to classify every network as public or private. The trouble is that Windows can’t figure out which one our point to point link is, as it has static IPs, no DHCP server, no gateway and no other computers. So it silently firewalls all traffic from it. I’d define it as a private network, but the button is simply missing - and it didn’t even ask.

The solution involves using gpedit.msc to make Windows see unknown networks as private ones. I’m not sure this is the best solution, but I haven’t found another one that works.

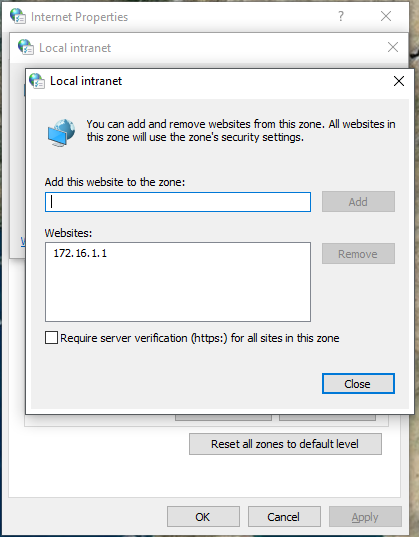

Then Windows complains every time I copy a file from the server. It turns out that when you connect to a SMB share by IP address, Windows treats it as outside the “trust zone” and possibly dangerous. An exception to this can be made, and we’re good again.

And here we go!

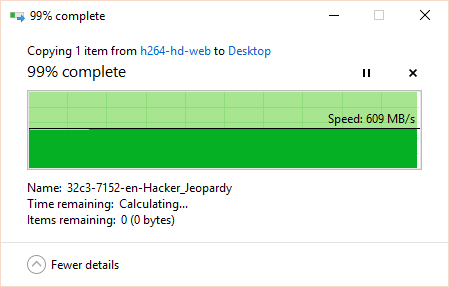

Can’t argue with that…
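If you’d like a number without a benchmarking tool, a minimal sketch like the one below reads a file sequentially and reports the rate. The function name and the path you pass in are your own choices, nothing here is specific to this setup. Bear in mind that a second run over the same file may be served from cache on either machine, so use a file larger than the RAM involved or expect inflated results.

```python
import sys
import time


def measure_read_mb_per_s(path: str, chunk_size: int = 4 * 1024 * 1024) -> float:
    """Read the whole file sequentially and return the rate in MB/s (10**6 bytes)."""
    total = 0
    start = time.perf_counter()
    with open(path, "rb", buffering=0) as f:   # unbuffered on the Python side
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            total += len(chunk)
    # Guard against a zero interval on tiny files.
    elapsed = max(time.perf_counter() - start, 1e-9)
    return total / elapsed / 1e6


if __name__ == "__main__" and len(sys.argv) > 1:
    # Pass a large file on the mapped drive as the first argument.
    print(f"{measure_read_mb_per_s(sys.argv[1]):.0f} MB/s")
```

Run it once against a file on the share over the gigabit path and once over the 10 gigabit link to see the difference for your own hardware.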